Unlock Better Designs: User Testing Methods Explained

In the dynamic realm of digital design and creative professions, the journey from concept to compelling user experience is rarely linear. It’s a continuous cycle of creation, evaluation, and refinement. At the heart of this process lies a critical set of practices: **user testing methods**. These systematic approaches allow designers and product teams to evaluate products, prototypes, or concepts with representative users, revealing invaluable insights that guide development and validate design decisions. By understanding how real people interact with digital products, professionals can pinpoint usability issues, gather meaningful feedback, and ensure the solutions they build are intuitive, effective, and truly user-centered.

What are User Testing Methods and Why are They Crucial for Digital Design?

User testing methods encompass a diverse array of techniques employed to collect qualitative and quantitative data about user behavior, attitudes, and perceptions regarding a digital product or service. Their fundamental purpose is to bridge the gap between design intent and actual user experience, providing an empirical basis for design choices rather than relying solely on assumptions or internal perspectives. For professionals across UI/UX design, product development, and creative workflows, integrating these methods is not merely a best practice—it’s a strategic imperative.

The importance of these design validation strategies for digital design cannot be overstated. They empower teams to:

- **Identify Usability Issues Early:** Catching problems during prototyping saves significant time and resources compared to fixing them post-launch.

- **Validate Design Hypotheses:** Confirming whether proposed solutions genuinely address user needs and pain points.

- **Understand User Behavior & Motivations:** Moving beyond surface-level interactions to grasp the underlying ‘why’ behind user actions.

- **Improve User Satisfaction & Engagement:** Creating digital experiences that are intuitive, efficient, and enjoyable leads to higher retention and loyalty.

- **Make Data-Driven Decisions:** Shifting from subjective opinions to evidence-based design choices, fostering greater confidence in product direction.

- **Reduce Development Risk:** Minimizing the likelihood of launching a product that fails to meet market demands or user expectations.

Ultimately, by systematically evaluating designs with real users, digital design and creative professionals can craft more impactful, successful, and enduring digital products that genuinely resonate with their target audience. These approaches are integral to the iterative nature of modern product development, ensuring that a continuous feedback loop informs and refines the user journey.

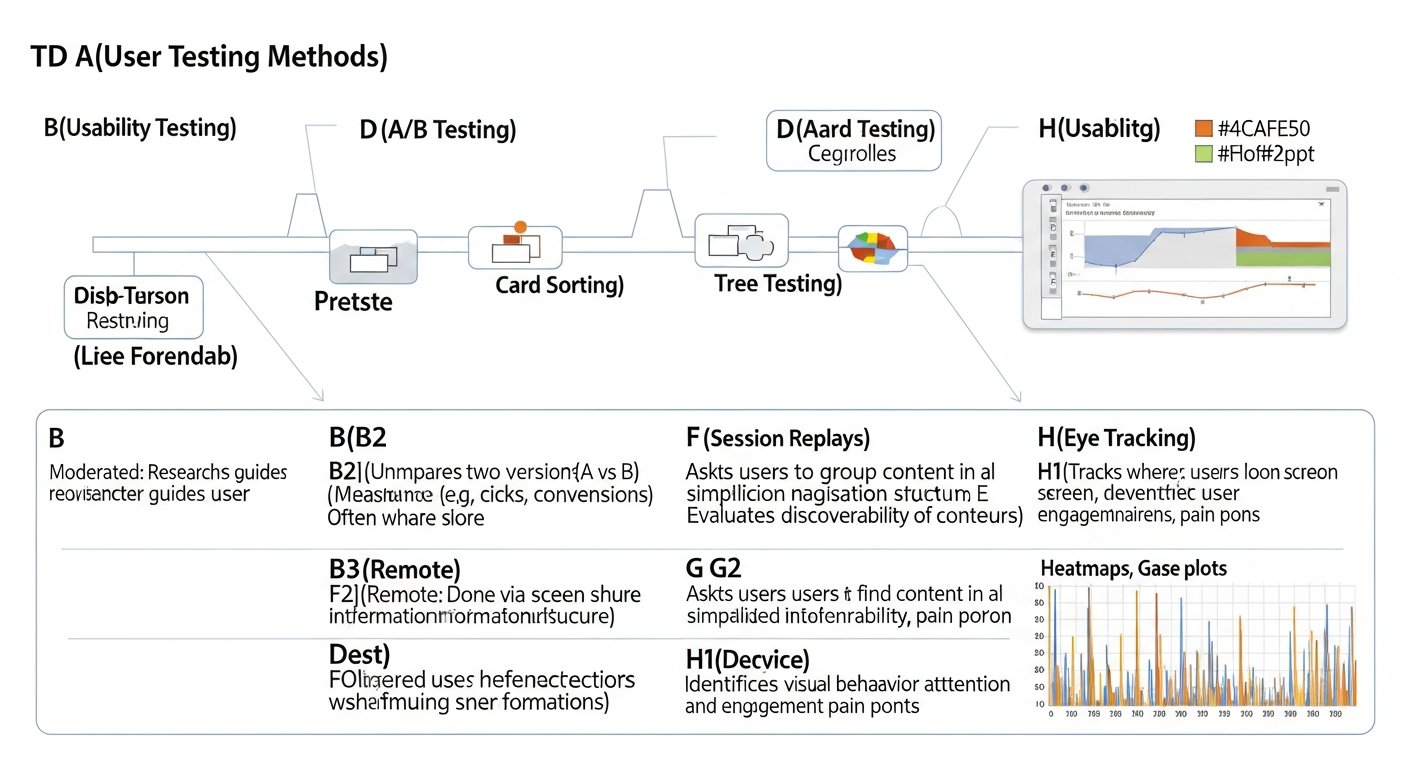

What are Key Qualitative User Testing Methods?

Understanding the diverse array of user testing methods is paramount for any digital design professional. This section delves into the foundational qualitative approaches that provide rich, in-depth insights into user behaviors and motivations. These techniques are designed to uncover the ‘why’ behind user actions, offering a nuanced understanding that quantitative data often cannot provide. They are particularly valuable in the discovery and ideation phases of product development.

What is Moderated Usability Testing?

What it is: Moderated usability testing involves a facilitator guiding a participant through a set of tasks on a product or prototype, observing their actions, listening to their “think-aloud” commentary, and asking follow-up questions in real-time. This can be conducted in-person or remotely via video conferencing tools.

When to use it: Ideal for early-stage prototypes, complex interactions, or when needing to understand specific pain points and underlying thought processes. It’s excellent for uncovering unexpected issues and gathering rich qualitative data.

Typical participants: Generally 5-8 participants per round are sufficient to uncover the majority of critical usability issues (Nielsen Norman Group's widely cited guideline is about five users per round, with further rounds after each design iteration).
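The reasoning behind small samples is often illustrated with the problem-discovery model associated with Nielsen and Landauer: if each participant reveals a given problem with probability p, then n participants are expected to reveal a share of 1 - (1 - p)^n of the problems. The sketch below assumes p = 0.31, the average reported in their studies; the real value varies by product and task, so treat the numbers as illustrative only. The function name is hypothetical.

```python
def proportion_found(n_users: int, p_detect: float = 0.31) -> float:
    """Expected share of usability problems observed at least once.

    p_detect is an ASSUMED per-participant detection probability
    (0.31 is a commonly quoted average, not a property of your product).
    """
    if n_users < 0 or not 0.0 <= p_detect <= 1.0:
        raise ValueError("n_users must be >= 0 and p_detect within [0, 1]")
    return 1.0 - (1.0 - p_detect) ** n_users


# Show how quickly returns diminish as participants are added.
for n in (1, 3, 5, 8, 15):
    print(f"{n:>2} users -> {proportion_found(n):.0%} of problems")
```

Under this assumption, five participants surface roughly 84% of problems, which is why several small rounds tend to be preferred over one large study.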

What data it yields: Behavioral observations, user quotes, perceived difficulties, task completion rates (qualitative assessment), task time (qualitative context), identification of specific interaction issues, mental models.

Typical tools: UserTesting.com, Lookback, Maze, Zoom, Google Meet.

- Pros:

  - Allows for probing questions and clarification in real-time.

  - Provides rich, contextual insights into user behavior and thought processes.

  - Facilitator can adapt the test script based on participant responses.

  - Excellent for identifying “unknown unknowns.”

- Cons:

  - Can be time-consuming to moderate and analyze.

  - Requires skilled moderators to avoid leading participants.

  - Smaller sample size may not be statistically representative.

  - Potential for moderator bias.
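The small-sample caveat can be made concrete by attaching a confidence interval to any completion rate you report. The sketch below uses the adjusted Wald (Agresti-Coull) interval, which is often suggested for small usability samples; the function name and the example counts are illustrative assumptions, not data from a real study.

```python
from statistics import NormalDist


def adjusted_wald_interval(successes: int, trials: int, confidence: float = 0.95):
    """Adjusted Wald (Agresti-Coull) interval for a task completion rate."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    # Two-sided critical value, about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    n_adj = trials + z ** 2
    p_adj = (successes + z ** 2 / 2) / n_adj
    margin = z * (p_adj * (1 - p_adj) / n_adj) ** 0.5
    # Clamp to the valid probability range.
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# Hypothetical result: 4 of 5 participants completed the task.
low, high = adjusted_wald_interval(4, 5)
print(f"Observed 80%, plausible range {low:.0%} to {high:.0%}")
```

The wide range is the point: with five participants, a completion rate is a qualitative signal rather than a precise measurement.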

Practical Tip: Encourage participants to “think aloud” as they perform tasks. This vocalization of their thoughts, feelings, and expectations provides invaluable insight into their mental model and decision-making process, directly informing UI/UX research strategies.

What are User Interviews and How Do They Uncover Motivations?

What it is: User interviews are one-on-one conversations between a researcher and a target user, designed to explore their experiences, needs, motivations, and perceptions related to a specific domain or product. They are open-ended and exploratory in nature.

When to use it: Best for the discovery and ideation phases to understand user problems, validate needs, define personas, and gather anecdotal evidence before significant design work begins. It helps shape product research methodologies.

Typical participants: Often 8-15 interviews are conducted, but the number can vary based on the depth of insights sought and the diversity of the user segments.

What data it yields: User stories, pain points, motivations, emotional responses, feature requests, workflow descriptions, and contextual understanding.

Typical tools: Video conferencing tools (Zoom, Google Meet), note-taking software, audio recorders.

- Pros:

  - Provides deep qualitative understanding of user needs and motivations.

  - Flexible and adaptable to unexpected insights.

  - Allows for building empathy with users.

  - Can uncover latent needs that users might not articulate directly.

- Cons:

  - Subject to interviewer bias.

  - Time-consuming to conduct and analyze.

  - Small sample size limits generalizability.

  - What users say they do may differ from what they actually do.

Practical Tip: Use open-ended questions (e.g., “Tell me about a time when…”) and active listening to encourage participants to share rich stories and avoid leading them towards predetermined answers. Focus on past behaviors rather than hypothetical future actions.

How Do Card Sorting and Tree Testing Optimize Information Architecture?

What they are:

- **Card Sorting:** A technique where users organize topics into categories that make sense to them and often label those categories. It helps uncover users’ mental models for information organization.

- **Tree Testing (Reverse Card Sort):** Users are given a task and asked to find where they would expect to locate specific content within a hierarchical menu structure (a ‘tree’). It evaluates the findability of items within an existing or proposed information architecture.

When to use them: Crucial during the information architecture and early prototyping stages to design intuitive navigation structures, menu labels, and content organization for digital products. These usability evaluation techniques are invaluable for content-heavy sites or complex applications.

Typical participants: For card sorting, 15-30 participants are typically recommended. For tree testing, 50-100 participants are often ideal for reliable data, though fewer can still yield insights.

What data they yield:

- Card Sorting: Grouping patterns, common categories, user-generated labels, dendrograms (showing clustering).

- Tree Testing: Success rates for finding items, directness (how many steps taken), first click analysis, common paths taken, areas of confusion.
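The grouping patterns behind a card-sort dendrogram usually start from a simple co-occurrence count: how often two cards were placed in the same group. Here is a minimal sketch of that first step, assuming each participant's sort is a list of groups and each group is a list of card names; the card names and the function name are made up for illustration.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sorts):
    """Return, for each card pair, the share of participants who grouped them together."""
    counts = Counter()
    for groups in sorts:
        for group in groups:
            # Sort names so ("A", "B") and ("B", "A") map to the same key.
            for a, b in combinations(sorted(set(group)), 2):
                counts[(a, b)] += 1
    n = len(sorts)
    return {pair: c / n for pair, c in counts.items()}


# Hypothetical results from three participants.
sorts = [
    [["Pricing", "Plans"], ["Login", "Profile"]],
    [["Pricing", "Plans", "Login"], ["Profile"]],
    [["Pricing", "Plans"], ["Login", "Profile"]],
]
similarity = co_occurrence(sorts)
for pair, share in sorted(similarity.items(), key=lambda kv: -kv[1]):
    print(pair, f"{share:.0%}")
```

Pairs with high shares are candidates for living under the same navigation label; dedicated tools typically feed a matrix like this into hierarchical clustering to draw the dendrogram.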

Typical tools: Optimal Workshop, UserZoom, Maze, Userlytics.

- Pros:

  - Directly informs the structure of navigation and content.

  - Based on users’ mental models, leading to more intuitive designs.

  - Can be run remotely and unmoderated, scaling efficiently.

  - Reveals insights into labeling and terminology effectiveness.

- Cons:

  - Card sorting can be time-consuming for participants with many cards.

  - Context for individual items might be lost in card sorting.

  - Tree testing doesn’t involve visual design, only the hierarchy.

  - Results require careful interpretation, especially for larger datasets.

Practical Tip: For card sorting, consider a ‘hybrid’ approach where users can create their own categories but also use some predefined ones. This balances user freedom with some structural guidance, refining information architecture.
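For tree testing, the headline metrics are simple ratios over participants' recorded paths. The sketch below assumes each path is the ordered list of menu nodes a participant visited, ending with the node they chose, and it counts an attempt as "direct" only when it matches the ideal path exactly. Tools differ in how they define directness, so treat these definitions and names as assumptions.

```python
from typing import Dict, List, Sequence


def tree_test_metrics(paths: Sequence[List[str]], ideal_path: List[str]) -> Dict[str, float]:
    """Summarize one tree-test task from participants' click paths."""
    if not paths or not ideal_path:
        raise ValueError("need at least one path and a non-empty ideal path")
    target = ideal_path[-1]
    total = len(paths)
    successes = [p for p in paths if p and p[-1] == target]
    # Direct success: reached the target with no detours or backtracking.
    direct = [p for p in successes if p == ideal_path]
    first_click_ok = [p for p in paths if p and p[0] == ideal_path[0]]
    return {
        "success_rate": len(successes) / total,
        "direct_success_rate": len(direct) / total,
        "first_click_rate": len(first_click_ok) / total,
    }


# Hypothetical task: "Find out how much the Pro plan costs."
paths = [
    ["Products", "Pricing"],             # direct success
    ["Support", "Products", "Pricing"],  # success after a wrong first click
    ["Products", "Features"],            # right branch, wrong answer
    ["Support", "FAQ"],                  # failure
]
print(tree_test_metrics(paths, ideal_path=["Products", "Pricing"]))
```

A gap between the success rate and the direct success rate suggests that users get there eventually but the top-level labels are sending them the wrong way first.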

What are Key Quantitative User Testing Methods?

While qualitative methods provide depth and context, quantitative user testing approaches offer breadth and statistical significance. These techniques focus on measurable data points, allowing digital designers to quantify user behavior, identify trends, and make data-driven decisions at scale. They are particularly effective for validating specific design choices, comparing alternatives, and tracking performance over time.

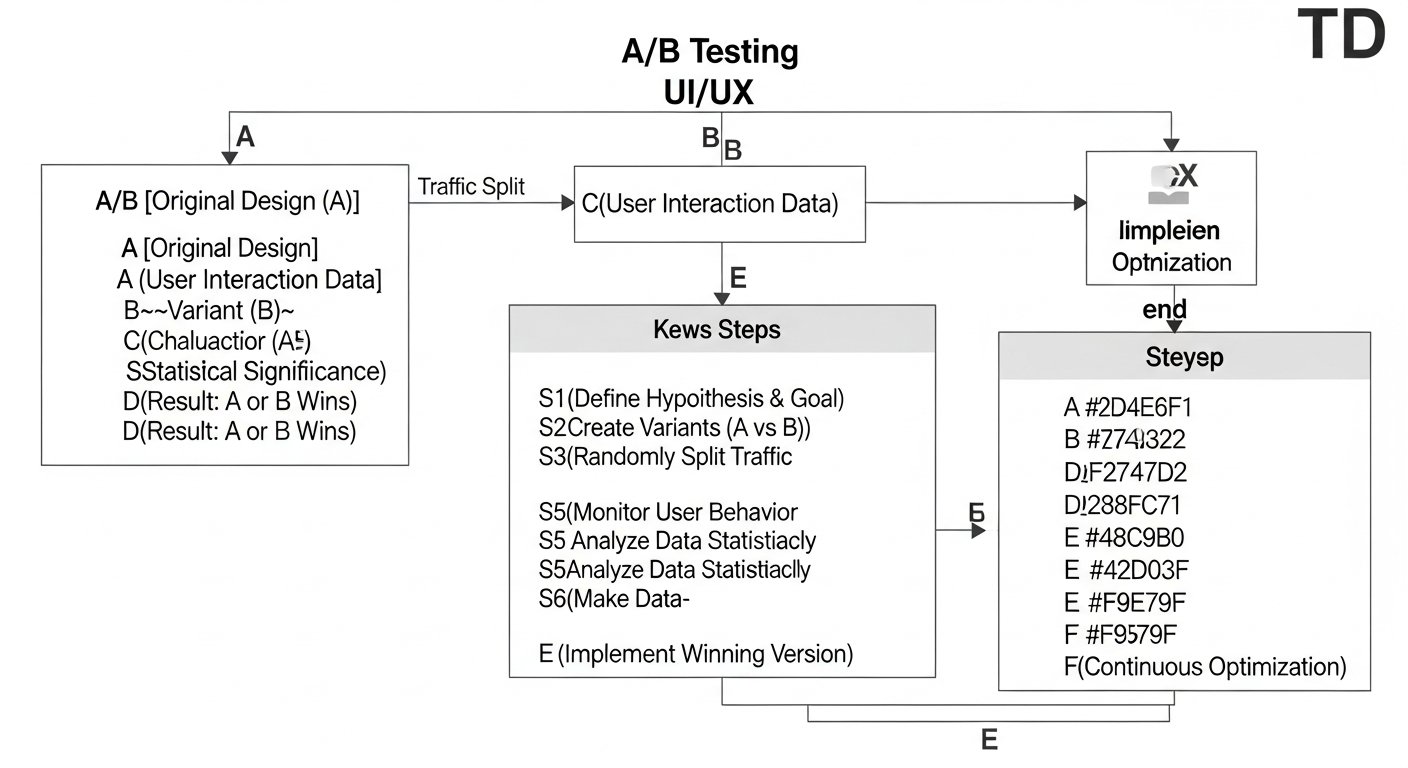

What is A/B Testing and How Does it Drive Design Decisions?

What it is: A/B testing (or split testing) involves comparing two or more versions of a webpage, app screen, or other digital element (A and B) to see which one performs better against a specific goal. Users are randomly assigned to see one version, and their interactions are measured to determine statistical significance.
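Random assignment also needs to be stable, so that a returning user keeps seeing the same version. One common way to get both properties is to hash the user ID together with an experiment name; this is a sketch of that idea, not a description of how any particular testing tool works, and the function and identifiers are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to a variant with a roughly even split."""
    # Including the experiment name keeps assignments independent across tests.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# The same user always lands in the same variant for a given experiment.
print(assign_variant("user-1042", "checkout-button-copy"))
print(assign_variant("user-1042", "checkout-button-copy"))

# Across many users the split should be close to 50/50.
sample = [assign_variant(f"user-{i}", "checkout-button-copy") for i in range(10_000)]
print("Share in A:", sample.count("A") / len(sample))
```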

When to use it: Best for optimizing existing designs, validating specific design changes (e.g., button color, headline, layout), improving conversion rates, and making data-driven product decisions. It’s primarily a post-launch optimization technique.

Typical participants: Hundreds to thousands of users, depending on the traffic and the desired statistical power.
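To see where "hundreds to thousands" comes from, a standard two-proportion sample-size approximation can be sketched as follows. It assumes a two-sided test, equal group sizes, and conversion rates you have guessed in advance; the baseline and expected rates in the example are invented, and the function name is hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline: float, expected: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed per variant to detect baseline -> expected."""
    if not (0 < baseline < 1 and 0 < expected < 1) or baseline == expected:
        raise ValueError("rates must be in (0, 1) and differ from each other")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    p_bar = (baseline + expected) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(baseline * (1 - baseline) + expected * (1 - expected))
    ) ** 2
    return ceil(numerator / (expected - baseline) ** 2)


# Hypothetical goal: lift conversion from 10% to 12%.
print(sample_size_per_variant(0.10, 0.12), "users per variant")
# A larger expected lift needs far fewer users.
print(sample_size_per_variant(0.10, 0.15), "users per variant")
```

Small expected lifts push the requirement into the thousands per variant, which is why low-traffic products often struggle to run conclusive A/B tests.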

What data it yields: Conversion rates, click-through rates, bounce rates, revenue per user, time on page, and other predefined metrics, with statistical significance.

Typical tools: VWO, Optimizely, Adobe Target. (Google Optimize, formerly a common choice, was discontinued in September 2023.)

- Pros:

  - Provides statistically significant data for design decisions.

  - Minimizes risk by testing changes on a subset of users.

  - Clear winner and loser, making decision-making straightforward.

  - Excellent for continuous optimization and incremental improvements.

- Cons:

  - Only tests one variable or a few distinct versions at a time.

  - Requires significant traffic to reach statistical significance.

  - Doesn’t explain “why” one version performs better, only “that” it does.

  - Can be complex to set up and manage multiple concurrent tests.

Practical Tip: Focus on testing one significant variable at a time (e.g., a call-to-action button’s text or color, but not both simultaneously). This ensures that any observed differences in performance can be attributed directly to that single change, streamlining design validation strategies.
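Once a single-variable test has run, "statistical significance" is commonly checked with a two-proportion z-test. The sketch below is one simple way to do that with the Python standard library; commercial tools may use different (for example sequential or Bayesian) methods, and the conversion counts here are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one user")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; test is undefined")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: A converts 200 of 4000 users, B converts 250 of 4000.
z, p = two_proportion_z_test(200, 4000, 250, 4000)
print(f"z = {z:.2f}, p = {p:.4f}")
print("Significant at 5%" if p < 0.05 else "Not significant at 5%")
```

Decide the sample size and the significance threshold before the test starts; checking the p-value repeatedly and stopping as soon as it dips below 0.05 inflates the false-positive rate.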

How Do Surveys and Questionnaires Gather Broad User Feedback?

What they are: Surveys and questionnaires consist of a structured set of questions designed to collect feedback, opinions, and demographic information from a large group of users. They can include various question types, such as multiple-choice, rating scales (e.g., Likert scale for System Usability Scale – SUS), and open-ended text fields.

When to use them: Ideal for gathering broad feedback on user satisfaction, perceived usability, feature prioritization, demographic data, or validating assumptions across a large user base. Useful at almost any design stage, from discovery to post-launch evaluation.

Typical participants: Tens to thousands of participants, depending on the target population and desired statistical confidence.
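How many responses you need depends on how precise the percentages should be. A rough guide is the margin of error for a proportion, sketched below under the assumptions of a simple random sample and a large population; real survey samples are rarely that clean, so read the result as a lower bound on the uncertainty. The function name is hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def margin_of_error(sample_size: int, proportion: float = 0.5,
                    confidence: float = 0.95) -> float:
    """Approximate margin of error for a reported survey percentage."""
    if sample_size <= 0 or not 0 <= proportion <= 1 or not 0 < confidence < 1:
        raise ValueError("invalid sample size, proportion, or confidence")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # proportion=0.5 is the worst case and gives the widest margin.
    return z * sqrt(proportion * (1 - proportion) / sample_size)


for n in (30, 100, 400, 1000):
    print(f"{n:>4} responses -> +/- {margin_of_error(n):.1%}")
```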

What data they yield: Quantitative data (percentages, averages of ratings), qualitative snippets (from open-ended questions), sentiment, demographic breakdowns, SUS scores.

Typical tools: SurveyMonkey, Typeform, Google Forms, Qualtrics, Hotjar (for on-site surveys).

- Pros:

  - Efficiently gathers data from a large number of users.

  - Cost-effective and scalable.

  - Can be easily distributed and analyzed.

  - Useful for quantifying satisfaction or specific attitudes.

- Cons:

  - Users may not always provide accurate or truthful answers.

  - Lack of context for responses (e.g., doesn’t show “why” they rated something poorly).

  - Poorly designed questions can lead to biased or unhelpful data.

  - Can suffer from low response rates.

Practical Tip: Keep surveys concise and focused. Use a mix of question types, including a few open-ended questions to provide qualitative context to quantitative ratings. Implement the System Usability Scale (SUS) for a quick, reliable measure of perceived usability.
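SUS scoring trips people up because odd- and even-numbered items are scored in opposite directions. The standard procedure is: for odd items subtract 1 from the rating, for even items subtract the rating from 5, then multiply the sum by 2.5. A minimal sketch, assuming the ten ratings arrive in questionnaire order on a 1-5 scale (the function name is hypothetical):

```python
def sus_score(responses):
    """Convert ten 1-5 ratings (in questionnaire order) to a 0-100 SUS score."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs exactly ten ratings between 1 and 5")
    total = 0
    for index, rating in enumerate(responses, start=1):
        if index % 2 == 1:
            total += rating - 1   # odd items are positively worded
        else:
            total += 5 - rating   # even items are negatively worded
    return total * 2.5


# Hypothetical participant: mostly agrees with positive items.
print(sus_score([4, 2, 5, 1, 4, 2, 4, 2, 5, 1]))
```

The result is a score on a 0-100 scale, not a percentage; average it across participants and compare it against a benchmark or a previous release rather than reading it in isolation.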

How Do Eye-Tracking and Heatmaps Visualize User Attention?

What they are:

- **Eye-Tracking:** Uses specialized hardware or software to precisely record where users look on a screen, their gaze path, and fixation points.

- **Heatmaps:** Visual representations of user activity on a webpage or app screen, showing areas of high (hot) and low (cold) interaction. Types include click maps (where users click), scroll maps (how far they scroll), and movement maps.

When to use them: Excellent for understanding visual hierarchy, identifying overlooked elements, optimizing layout, and ensuring critical calls-to-action are visible. Ideal for visual design validation strategies, particularly in the prototyping and post-launch optimization phases.

Typical participants: For eye-tracking, a smaller group (e.g., 10-20) can provide meaningful patterns. For heatmaps, a larger volume of traffic (hundreds to thousands of users) is needed for reliable data.

What data they yield: Gaze plots, heatmaps (click, scroll, attention), areas of interest (AOI) analysis, time to first fixation, visual patterns, identification of distracting elements.
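Under the hood, a click heatmap is essentially a count of interactions per region of the page. The sketch below bins raw click coordinates into a coarse grid; it assumes clicks are recorded as (x, y) pixel positions relative to the top-left of the page, and the page size, grid size, and sample clicks are all invented for illustration.

```python
def click_grid(clicks, page_width, page_height, cols=4, rows=4):
    """Count clicks per grid cell; rows run top to bottom, columns left to right."""
    if page_width <= 0 or page_height <= 0 or cols <= 0 or rows <= 0:
        raise ValueError("page size and grid dimensions must be positive")
    grid = [[0] * cols for _ in range(rows)]
    for x, y in clicks:
        # Ignore clicks recorded outside the page bounds.
        if not (0 <= x < page_width and 0 <= y < page_height):
            continue
        col = min(cols - 1, int(x * cols / page_width))
        row = min(rows - 1, int(y * rows / page_height))
        grid[row][col] += 1
    return grid


# Hypothetical clicks on an 800x600 page, binned into a 2x2 grid.
clicks = [(10, 10), (15, 20), (790, 590), (900, 10)]
for row in click_grid(clicks, 800, 600, cols=2, rows=2):
    print(row)
```

Heatmap tools render counts like these as color intensity; with only a handful of clicks per cell the picture is mostly noise, which is why heatmaps need substantial traffic.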

Typical tools: Tobii Pro (eye-tracking hardware and software); Hotjar, Crazy Egg, Fullstory (heatmaps and session recordings).

- Pros:

  - Provides objective data on actual user attention and interaction.

  - Uncovers visual usability issues not easily identified otherwise.

  - Excellent for optimizing visual design and content placement.

  - Powerful for communicating insights to stakeholders visually.

- Cons:

  - Eye-tracking hardware can be expensive and complex to set up.

  - Heatmaps show “where” but not necessarily “why” users interacted (needs context).

  - Can be challenging to interpret complex visual data without other methods.

  - Requires significant traffic for meaningful heatmap data.

Practical Tip: Combine heatmap analysis with session recordings to understand the “why” behind click and scroll patterns. Seeing a user’s entire journey and interactions provides crucial context to the aggregated visual data, enhancing UI/UX research strategies.

How Do Essential User Testing Methods Compare?

Selecting the optimal user testing methods is a crucial decision for any digital design project. The table below provides a concise comparison of the essential approaches discussed, highlighting their key characteristics to help you make informed choices tailored to your project’s specific needs and constraints.

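| Method | Type | Ideal Design Stage | Key Data Yielded | Typical Participants | Pros | Cons |
|---|---|---|---|---|---|---|
| Moderated Usability Testing | Qualitative | Ideation, Prototyping | Behavioral observations, “think-aloud” commentary, interaction issues, mental models | 5-8 | Real-time probing; rich contextual insights; adaptable script | Time-consuming; requires skilled moderators; small sample; moderator bias |
| User Interviews | Qualitative | Discovery, Ideation | User stories, pain points, motivations, emotional responses | 8-15 | Deep understanding of needs and motivations; flexible; builds empathy | Interviewer bias; time-consuming; limited generalizability; stated vs. actual behavior |
| Card Sorting & Tree Testing | Mixed (Qualitative/Quantitative) | Information Architecture, Prototyping | Mental models, grouping patterns, success rates, navigation paths | 15-30 (card sorting); 50-100 (tree testing) | Directly informs navigation; grounded in users’ mental models; scales remotely | Long sorts tire participants; item context can be lost; no visual design tested; needs careful interpretation |
| A/B Testing | Quantitative | Post-Launch, Optimization | Conversion rates, click-through rates, statistical significance | Hundreds-Thousands | Statistically grounded decisions; limits risk to a subset of users; supports continuous optimization | Few variables at a time; needs significant traffic; shows “that,” not “why”; complex to run concurrently |
| Surveys & Questionnaires | Quantitative (with qualitative elements) | Discovery, Prototyping, Post-Launch | User satisfaction, perceived usability (SUS), feature prioritization, demographics | Tens-Thousands | Efficient at scale; cost-effective; easy to distribute and analyze | Inaccurate self-reports; little context; biased question design; low response rates |
| Eye-Tracking & Heatmaps | Quantitative | Prototyping, Post-Launch | Gaze paths, click patterns, scroll behavior, visual attention | 10-20 (eye-tracking); hundreds-thousands (heatmaps) | Objective attention data; reveals visual usability issues; persuasive visuals for stakeholders | Costly eye-tracking hardware; shows “where,” not “why”; hard to interpret alone; heatmaps need traffic |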

How to Select the Right User Testing Method for Your Project?

Choosing the appropriate digital product testing technique is as crucial as the testing itself. It’s a strategic decision that needs to align with your project goals, resources, and the stage of your product’s lifecycle. A thoughtful selection ensures that you gather the most relevant and actionable insights for your specific challenges.

How to Match User Testing Methods to Design Stages?

The product development lifecycle offers natural breakpoints where certain usability evaluation techniques shine brightest:

- **Discovery Stage:** At the very beginning, when defining the problem and understanding user needs.

  - **Ideal Methods:** User Interviews, Surveys (for broad needs assessment), Contextual Inquiry (observing users in their natural environment).

  - **Goal:** Uncover pain points, validate problem existence, build empathy, define user personas.

- **Ideation Stage:** When generating and refining initial concepts and information architecture.

  - **Ideal Methods:** Card Sorting, Tree Testing, Low-Fidelity Usability Testing (with paper prototypes or wireframes).

  - **Goal:** Structure information logically, validate core concepts, test navigation pathways.

- **Prototyping Stage:** As you develop more detailed designs and interactive prototypes.

  - **Ideal Methods:** Moderated and Unmoderated Usability Testing, Eye-Tracking (for visual elements), First Click Testing.

  - **Goal:** Identify usability issues, test specific flows, gather feedback on interaction design and UI elements.

- **Post-Launch & Optimization Stage:** After the product is live, focusing on continuous improvement and performance.

  - **Ideal Methods:** A/B Testing, Surveys (for satisfaction), Heatmaps, Analytics Review, Session Recordings, Remote Unmoderated Usability Testing.

  - **Goal:** Optimize conversion rates, track satisfaction, identify areas for improvement, validate new features.

What Factors Should You Consider When Selecting a User Testing Method?

Practical constraints heavily influence the feasibility of different product research methodologies:

- **Budget:** Some methods, like in-person moderated usability testing or eye-tracking hardware, can be costly. Remote unmoderated tests or online surveys are often more budget-friendly. Consider the cost of recruitment, tools, incentives, and analyst time.

- **Time:** If you need quick insights, shorter surveys, rapid unmoderated testing, or small-scale interviews might be more appropriate. Comprehensive moderated studies or large A/B tests require more time for setup, execution, and analysis.

- **Participant Availability:** Recruiting specific user segments (e.g., highly specialized professionals) can be challenging and time-consuming. Methods requiring fewer participants (like moderated usability testing) or those allowing for broader recruitment (like surveys) might be more practical depending on your target audience.

- **Skill Set:** Some design validation strategies (e.g., advanced statistical analysis for A/B tests or nuanced moderation for interviews) require specialized skills. Ensure your team has the expertise or access to it.

What are the Best Practices for Implementing User Testing Methods?

Effective implementation of UX testing approaches goes beyond merely selecting a method; it involves meticulous planning, ethical considerations, and a commitment to iterative improvement. Adhering to best practices ensures that the data collected is reliable, actionable, and truly beneficial to the digital design process.

- **Define Clear Objectives:** Before selecting any method, explicitly state what questions you want to answer and what outcomes you hope to achieve. Vague objectives lead to unfocused testing and inconclusive results.

- **Recruit Representative Users:** Ensure your participants accurately reflect your target audience. Biased or unrepresentative participants will yield misleading data. Use screening questions to qualify participants.

- **Craft Unbiased Tasks & Questions:** Avoid leading questions or tasks that give away the “correct” answer. Tasks should be realistic scenarios that users would encounter in real life.

- **Conduct Pilot Tests:** Always run a small pilot test with internal team members or a few non-representative users. This helps identify flaws in your script, task design, or technical setup before involving actual participants.

- **Prioritize Ethical Considerations:** Obtain informed consent, ensure data privacy, and clearly communicate the purpose of the test. Make participants feel comfortable and respected.

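- **Observe, Don’t Intervene:** In unmoderated tests no facilitator is present, so participants work through difficulties on their own. In moderated tests, guide subtly, ask open-ended questions, and avoid helping users unless absolutely necessary; the moments where they struggle are often the most informative.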

- **Document & Analyze Systematically:** Record observations, user quotes, and quantitative metrics consistently. Use frameworks like affinity mapping for qualitative data and statistical analysis for quantitative data.

- **Iterate Based on Findings:** The goal is not just to find problems but to fix them. Translate insights into actionable design changes and re-test to validate improvements. User testing is an iterative process.

- **Communicate Insights Effectively:** Present findings clearly and concisely to stakeholders, highlighting key issues, recommendations, and their potential impact on the product and user experience.

- **Combine Methods for Holistic Understanding:** Don’t rely on a single method. Blend qualitative insights with quantitative data for a comprehensive understanding of user behavior and motivations.

What are Common Mistakes in User Testing and How Can They Be Avoided?

Even seasoned UX professionals can fall into common pitfalls when conducting user research. Being aware of these missteps is the first step toward improving the quality and impact of your digital product testing techniques.

- **Testing Too Late in the Cycle:** Waiting until development is nearly complete to test makes changes costly and difficult. Integrate testing early and often, even with low-fidelity prototypes.

- **Recruiting Non-Representative Users:** Testing with “anyone available” instead of your actual target audience provides irrelevant or misleading feedback. Invest time in proper participant screening.

- **Asking Leading Questions:** Questions like “Don’t you agree this feature is intuitive?” bias participants towards a positive response. Instead, ask “What are your initial thoughts on this feature?”

- **Not Defining Clear Objectives:** Starting a test without specific questions to answer means you won’t know what data to collect or how to interpret it. Clarify your goals upfront.

- **Focusing Only on “What” Without “Why”:** Quantitative data tells you what happened (e.g., low completion rate), but qualitative methods are needed to understand why it happened. Combine methods for deeper insights.

- **Ignoring Negative Results:** Sometimes tests show that a design doesn’t work, or a feature isn’t desired. Embrace these findings; they prevent costly development of flawed solutions.

- **Over-relying on a Single Method:** No single user testing method provides a complete picture. Use a mix of qualitative and quantitative techniques to triangulate your findings and build a robust understanding.

- **Failure to Iteratively Test:** Conducting one test and assuming all problems are solved is a mistake. Design is iterative; so is user testing. Re-test after making significant changes.

- **Not Addressing Researcher Bias:** Your own expectations or interpretations can skew results. Use clear protocols, peer review, and multiple observers to mitigate bias.

- **Over-Analyzing or Under-Analyzing Data:** Getting lost in too much data without deriving actionable insights, or conversely, making major decisions based on too little data. Focus on patterns and prioritize critical findings.

How Do User Testing Methods Evolve with Emerging Technologies?

The landscape of user testing is continually shaped by advancements in technology, offering exciting new possibilities for digital design and creative professionals. These evolutions enhance the speed, scale, and depth of insights achievable through various UX testing approaches.

- **AI-Powered Analytics:** Artificial intelligence and machine learning are transforming how user data is analyzed. AI can quickly identify patterns in qualitative data (e.g., sentiment analysis of open-ended survey responses or transcriptions), automate report generation, and even predict user behavior based on past interactions. This significantly speeds up the analysis phase, allowing designers to focus more on strategic application of insights.

- **Virtual and Augmented Reality Testing:** As VR/AR experiences become more prevalent, specialized testing methods are emerging. Designers can now evaluate interfaces and interactions within virtual environments, testing spatial computing, gesture controls, and immersive experiences in a controlled yet realistic setting. Tools that simulate these environments or allow for in-situ testing are becoming more sophisticated.

- **Advanced Remote Testing Platforms:** The shift towards remote work has accelerated the development of highly capable remote unmoderated and moderated testing platforms. These tools offer enhanced features for participant recruitment, task assignment, real-time observation, recording, and automated analysis, making global user research more accessible and efficient.

- **Biometric and Physiological Measures:** Beyond eye-tracking, technologies like facial expression analysis, skin conductance (EDA), and heart rate monitoring are being explored to capture users’ emotional and cognitive responses during interaction. While still largely in specialized research, these biometric data points offer a deeper layer of insight into subconscious user reactions.

- **Integrated Analytics & Experimentation Platforms:** Modern product analytics platforms are increasingly integrating A/B testing, feature flagging, and user feedback mechanisms directly into their ecosystems. This allows for seamless experimentation and continuous optimization, linking user behavior data directly to product changes.

- **Synthetic Users and Simulations:** While controversial, research into “synthetic users” – AI-driven agents that can simulate human behavior based on learned patterns – is beginning to emerge. This could potentially allow for preliminary testing of designs without actual human participants, though human validation will always remain crucial.

These evolving technologies underscore the dynamic nature of user experience research, continually providing new tools and techniques for digital design and creative professions to validate, refine, and innovate their offerings.

Conclusion: How to Master User Testing for Superior Digital Experiences

In the competitive landscape of digital products, the ability to consistently deliver intuitive, engaging, and effective user experiences is paramount. Mastering user testing methods is not merely a skill; it is a fundamental pillar of modern UI/UX design and a strategic differentiator for digital design and creative professionals. By embracing both qualitative and quantitative approaches, meticulously planning each study, and continuously iterating based on user feedback, teams can transform assumptions into validated insights and create products that truly resonate with their audience.

The journey through various UX testing approaches—from the deep dives of moderated usability testing and user interviews to the broad insights of surveys and the precision of A/B tests—equips designers with an invaluable toolkit. This commitment to understanding real users, identifying their pain points, and validating solutions is what elevates good design to great design, ensuring that every digital experience is crafted with intention, empathy, and demonstrable impact.

For a deeper understanding of the foundational principles that underpin these strategies, explore our comprehensive guide on UI/UX Design Fundamentals.

Sources & References

- Norman, D. A. (2013). The Design of Everyday Things: Revised and Expanded Edition. Basic Books. (Considered a foundational text for usability principles).

- Rubin, J., & Chisnell, D. (2008). Handbook of Usability Testing: How to Plan, Design, and Conduct Effective Tests (2nd ed.). John Wiley & Sons.

- Sauro, J., & Lewis, J. R. (2016). Quantifying the User Experience: Practical Statistics for User Research (2nd ed.). Morgan Kaufmann.

- UserTesting. (2023). The Ultimate Guide to User Testing. Retrieved from UserTesting.com

- Nielsen Norman Group. (2023). Articles & Reports on UX Research. Retrieved from NNGroup.com

About the Author

Jian Li, Creative Lead & UI/UX Strategist — I thrive on exploring the intersection of technology, art, and human behavior to create impactful digital products. With a Certified Usability Analyst (CUA) designation and an M.A. in Interaction Design, my passion lies in translating complex user needs into elegant, intuitive, and delightful user experiences. I advocate for integrating robust user testing methodologies into every stage of the design process, believing that user-centered design is the cornerstone of successful innovation.

Reviewed by Maya Singh, Senior Content Editor & UX Strategist — Last reviewed: October 27, 2023

Reviewed by Maya Singh, Senior Content Editor & UX Strategist — Last reviewed: March 28, 2026